@kaboroevich

3/16/2026, 5:46:00 AM

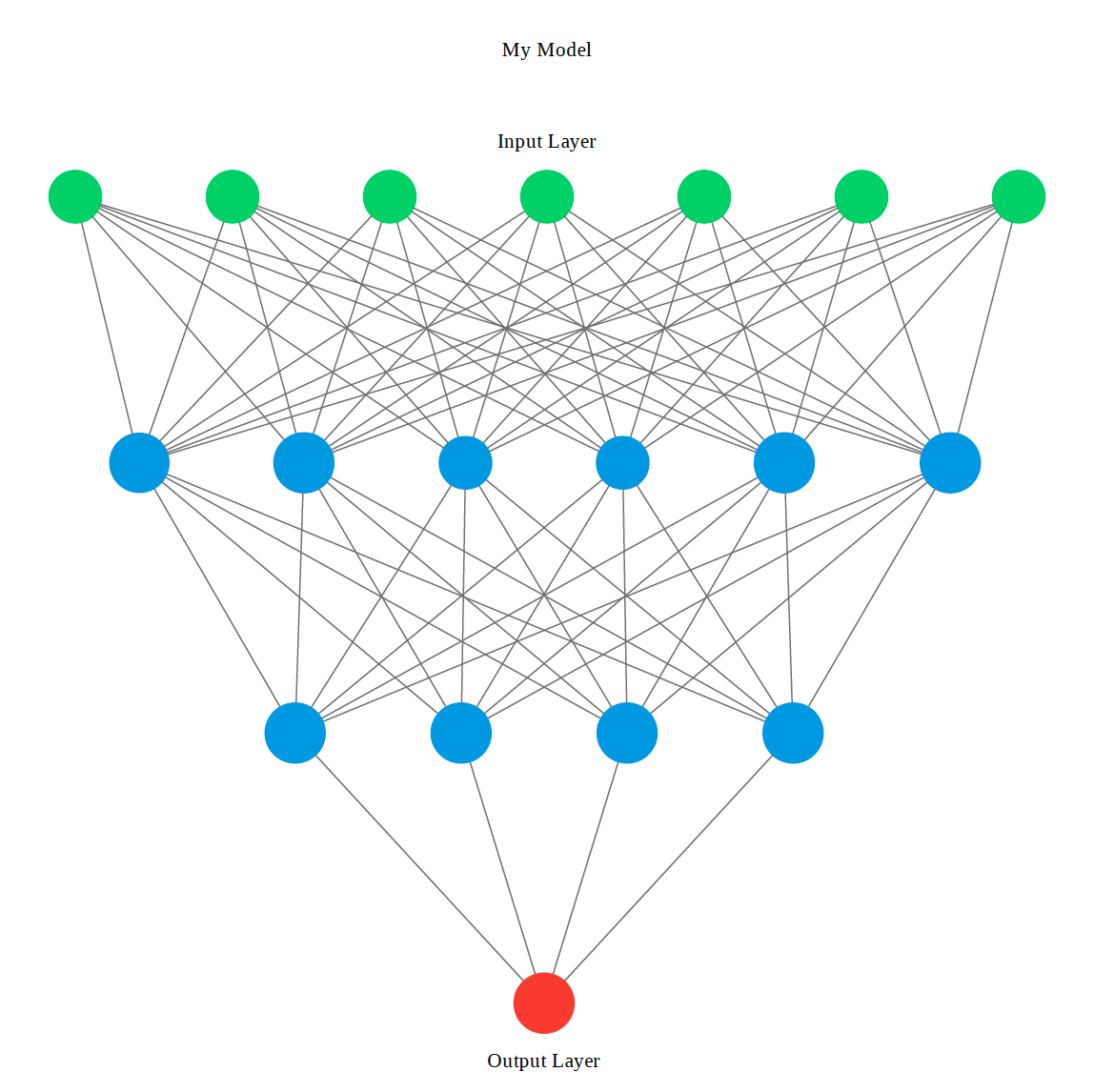

Just finished a deep dive into the Transformer architecture internals. The attention mechanism is still one of the most elegant ideas in ML. Here's my new video tutorial breaking it down from scratch with code: [link] Thread 🧵👇
